Before discussing the different power management techniques, let us first look at the degrees of freedom associated with power dissipation.

Since switching power dissipation is a major component of dynamic power dissipation (Pdyn), we can say that Pdyn ∝ α·Vdd^2·CL·f. So we can reduce dynamic power dissipation by reducing any of the parameters listed below –

α = switching activity

Vdd = supply voltage

CL = total load capacitance

f = frequency of operation
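The effect of each parameter can be checked with a few lines of Python. This is only a sketch: the proportionality is treated as an equality with a constant of 1, and the numeric values (activity 0.2, 1 nF, 1 GHz, 1.0 V) are illustrative assumptions, not figures from a real design.

```python
def dynamic_power(alpha, vdd, c_load, freq):
    """Relative switching power from Pdyn ∝ α·Vdd^2·CL·f (constant taken as 1)."""
    return alpha * vdd ** 2 * c_load * freq


# Illustrative baseline values (assumed, not from a real design).
baseline = dynamic_power(alpha=0.2, vdd=1.0, c_load=1e-9, freq=1e9)

# Halving the supply voltage cuts dynamic power to about a quarter ...
half_vdd = dynamic_power(alpha=0.2, vdd=0.5, c_load=1e-9, freq=1e9)

# ... while halving the frequency only cuts it to about a half.
half_freq = dynamic_power(alpha=0.2, vdd=1.0, c_load=1e-9, freq=0.5e9)

print(f"Vdd/2 : {half_vdd / baseline:.2f} of baseline")
print(f"f/2   : {half_freq / baseline:.2f} of baseline")
```

Because Vdd enters as a square, lowering the supply voltage is the most effective of the four knobs.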

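Similarly, as discussed earlier, static power dissipation rises as the threshold voltage falls: Psta ∝ 1/Vth to a first approximation (the sub-threshold leakage component in fact grows roughly exponentially as Vth is lowered).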

Vth = threshold voltage of the transistors

One more point is important: the delay associated with transistor operation depends on both the supply voltage (Vdd) and the threshold voltage (Vth). The delay grows as Vdd is lowered or as Vth is raised.

Although high-Vth transistors save static power, they introduce more delay into circuit operation, ultimately reducing performance.
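One common way to put numbers on this dependence is the alpha-power law, delay ∝ Vdd / (Vdd − Vth)^a. The sketch below assumes that model; it is not necessarily the expression the original figure showed, and the exponent `a = 1.3` and the voltages used are illustrative assumptions.

```python
def gate_delay(vdd, vth, a=1.3, k=1.0):
    """Relative gate delay under the alpha-power law (an assumed model).

    a is the velocity-saturation exponent and k a technology constant;
    both are placeholders chosen for illustration.
    """
    if vdd <= vth:
        raise ValueError("model is only meaningful for vdd > vth")
    return k * vdd / (vdd - vth) ** a


low_vth = gate_delay(vdd=1.0, vth=0.3)
high_vth = gate_delay(vdd=1.0, vth=0.5)
low_vdd = gate_delay(vdd=0.8, vth=0.3)

print(f"high Vth is {high_vth / low_vth:.2f}x slower than low Vth")
print(f"Vdd = 0.8 V is {low_vdd / low_vth:.2f}x slower than Vdd = 1.0 V")
```

Under this model, raising Vth or lowering Vdd both shrink the (Vdd − Vth) overdrive, which is why both power-saving moves cost speed.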

Now let us briefly discuss a few common power management techniques.

1. Multi Vth Design

As the name suggests, the design uses standard cells with different threshold voltages (Vth). As discussed above, Vth affects the performance of the design, so performance is traded off against power depending on the requirement.

Broadly the standard cells can be classified into 3 different categories –

• HVT cells – Standard cells made up of transistors having high Vth. These cells consume less power but are slow. They can be used on paths where timing is not critical, so we can afford to introduce delay while saving static power.

• LVT cells – Standard cells made up of transistors having low Vth. These cells are fast but consume more power. They are used on timing-critical paths.

• SVT cells – Standard cells made up of transistors having medium Vth. They offer a trade-off between HVT and LVT: they consume less power than LVT cells but are faster than HVT cells. They can be used when timing is missed by only a small margin.

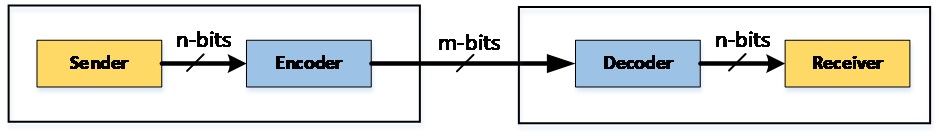
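The selection rule described by the three categories can be sketched as a small function. This is a hypothetical illustration rather than how any particular synthesis tool works: the function name, the idea of measuring slack with HVT cells first, and the 50 ps "small margin" are all assumptions.

```python
def pick_vth_flavor(slack_ps, small_margin_ps=50.0):
    """Choose a cell flavor for a path from its timing slack.

    slack_ps is the slack (in picoseconds) of the path when built from
    HVT cells; negative slack means timing is missed. The threshold
    small_margin_ps is an illustrative assumption.
    """
    if slack_ps >= 0:
        return "HVT"   # timing met: keep the low-leakage cells
    if slack_ps >= -small_margin_ps:
        return "SVT"   # missed by a small margin: medium-Vth cells
    return "LVT"       # timing critical: fastest, leakiest cells


for slack in (120.0, -20.0, -300.0):
    print(f"slack {slack:+.0f} ps -> {pick_vth_flavor(slack)}")
```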

2. Bus Encoding

A considerable amount of power is dissipated in transmitting data over system-level buses. A significant amount of this power can be saved by reducing the number of transitions, i.e. the switching activity, at the I/O interface. The data can be suitably encoded before it is sent over the I/O interface, and a decoder can be used to recover the original data at the receiving end.

Coding schemes can be broadly divided into two categories –

2.1. Non-redundant: Here an n-bit code is translated into another n-bit code, so the 2^n n-bit code words are mapped among themselves. Referring to Figure 1, in this case m = n. This scheme is useful only when the data sent over the bus is sequential.

Example – Gray coding

It reduces switching activity only when the data is sequential and highly correlated, as on an instruction address bus. Because consecutive Gray codes differ in exactly one bit, the number of transitions is limited to 1 for sequential data. For random data, as on a data bus, the number of transitions for binary and Gray code is approximately equal.
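The saving for sequential data can be counted directly. The sketch below uses the standard binary-to-Gray conversion (`value XOR (value >> 1)`); the helper names and the 3-bit address sequence are chosen here for illustration.

```python
def to_gray(value):
    """Convert a binary number to its reflected Gray code."""
    return value ^ (value >> 1)


def transitions(a, b):
    """Number of bus wires that toggle when the bus goes from a to b."""
    return bin(a ^ b).count("1")


def total_transitions(words):
    """Total toggles over a sequence of words sent back to back."""
    return sum(transitions(x, y) for x, y in zip(words, words[1:]))


addresses = list(range(8))  # sequential 3-bit addresses 0..7
binary_toggles = total_transitions(addresses)
gray_toggles = total_transitions([to_gray(a) for a in addresses])

print(binary_toggles, gray_toggles)  # 11 7
```

Each of the seven steps costs exactly one toggle in Gray code, while plain binary needs up to three (for example 3 to 4, i.e. 011 to 100).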

2.2. Redundant: Here an n-bit code is translated into an m-bit code (where m > n), so the 2^n n-bit code words are mapped into a larger set of 2^m elements. Unlike the non-redundant coding scheme, this is useful even when the data sent over the bus is not sequential.

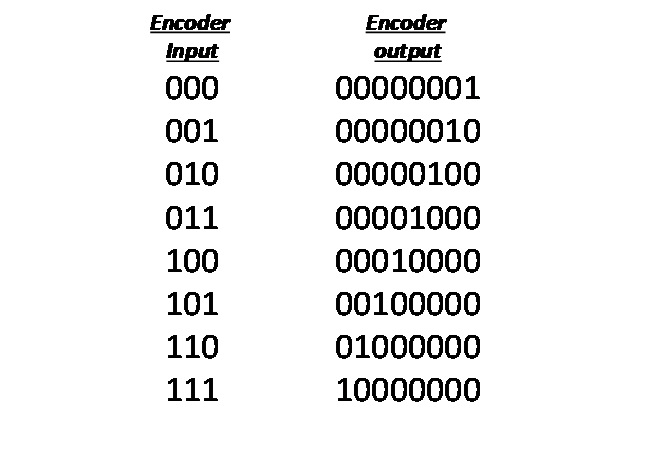

Example – One hot coding

It bounds the switching activity: whenever the data value changes, exactly 2 wires toggle, whatever the word size. But one-hot coding is not suitable for large bus sizes, as the number of wires required increases exponentially with the word size of the data (as shown above, a 3-bit data bus requires an 8-bit bus at the encoder output). There are other redundant coding techniques that overcome this problem, but they are not discussed in this article.
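Both properties of one-hot coding, the fixed transition count and the exponential bus width, can be verified with a short sketch. The helper names are illustrative, and the encoder is modelled as an integer with a single bit set.

```python
def one_hot(value, n_bits):
    """Encode an n-bit value as a one-hot word that is 2**n_bits wide."""
    if not 0 <= value < (1 << n_bits):
        raise ValueError("value does not fit in n_bits")
    return 1 << value


def transitions(a, b):
    """Number of bus wires that toggle when the bus goes from a to b."""
    return bin(a ^ b).count("1")


n_bits = 3
bus_width = 1 << n_bits  # 8 wires for a 3-bit data word

# Every change of value clears one wire and sets another: 2 toggles.
toggle_counts = {
    transitions(one_hot(a, n_bits), one_hot(b, n_bits))
    for a in range(bus_width)
    for b in range(bus_width)
    if a != b
}

print(bus_width, toggle_counts)  # 8 {2}
```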

3. Hardware Software Tradeoff

Whenever we start designing a system, we first identify which parts are to be implemented in HW and which in SW, and then we do the system integration. Some functionality can be realized in HW, in SW, or by a combination of both. Examples – ADC, encryption-decryption, compression-decompression, etc.

HW based approach – faster, costlier, consumes more power

SW based approach – slower, cheaper, consumes less power

4. Multiple Vdd Design

As mentioned earlier, power dissipation depends strongly on the supply voltage, so a lower supply voltage means less power consumption. But delay is roughly inversely proportional to Vdd, so lowering the voltage slows the circuit down, and we have to take that into account while scaling the voltage.

4.1. Static Voltage Scaling

In a design, different blocks can operate at different voltages, and the lower the voltage of a block, the less power it is likely to consume; therefore, it is imperative to create multiple voltage domains. To support this, voltage regulators are typically used to create different supplies from one source supply. IPs operating at a particular voltage are placed in the corresponding voltage island.

4.2. Dynamic Voltage and Frequency Scaling (DVFS)

DVFS is a technique used to optimize power consumption under varying workloads. Consider a CPU, whose workload is a time-varying function that depends heavily on the application being run. If we maintain a fixed voltage and frequency even though the workload varies, a fixed amount of power is dissipated all the time. DVFS instead adjusts the voltage and frequency according to the workload.

In other words, the IP is designed so that it does not consume a fixed amount of power all the time; instead, its power consumption depends on the performance level it is operating at. To implement this, various performance modes are created in the IP design. Each performance mode has an associated operating frequency, and each operating frequency has an associated voltage requirement; therefore, depending on the workload (and thereby the performance requirement), the system software can choose the operating mode.
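The mode selection described above can be sketched as a lookup over a table of performance modes. Everything concrete here is a hypothetical assumption made for illustration: the three modes, their frequencies and voltages, and the policy of picking the slowest mode that still meets the demand.

```python
from typing import NamedTuple


class OperatingPoint(NamedTuple):
    name: str
    freq_mhz: float
    vdd: float


# Hypothetical performance modes, sorted from slowest to fastest.
OPERATING_POINTS = [
    OperatingPoint("low", 400.0, 0.7),
    OperatingPoint("mid", 800.0, 0.9),
    OperatingPoint("high", 1200.0, 1.1),
]


def select_operating_point(required_mhz):
    """Pick the slowest mode whose frequency covers the demand."""
    for point in OPERATING_POINTS:
        if point.freq_mhz >= required_mhz:
            return point
    return OPERATING_POINTS[-1]  # demand exceeds all modes: run flat out


def relative_dynamic_power(point, reference=OPERATING_POINTS[-1]):
    """Dynamic power relative to the reference mode, using Pdyn ∝ Vdd^2·f."""
    return (point.vdd ** 2 * point.freq_mhz) / (
        reference.vdd ** 2 * reference.freq_mhz
    )


for demand in (300.0, 800.0, 2000.0):
    mode = select_operating_point(demand)
    print(f"{demand:6.0f} MHz -> {mode.name:4s} "
          f"({relative_dynamic_power(mode):.2f} of peak dynamic power)")
```

Because voltage drops along with frequency, the low mode in this made-up table runs at a third of the peak frequency but at well under a third of the peak dynamic power.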

5. Clock Gating

Consider a simple example: a flop that does not switch state for a considerable period of time. Since its clock keeps switching, the clock tree buffers keep switching state and hence consume power. Also, flops are made up of latches, so even though the input and output of the flop are not switching, some part of each latch still switches and consumes power. In such scenarios, power is dissipated unnecessarily.

It has been found that approximately 50% of dynamic power dissipation is due to clock-related circuitry. One of the most commonly used low-power techniques is clock gating (CG). Fundamentally, clock gating means stopping the clock to a logic block when the operations of that block are not needed (or the inputs to the block are not changing). Thus only leakage power is dissipated while a circuit/block is clock gated.
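A small behavioral model makes the saving concrete. The sketch below is an illustrative assumption, not a description of real gating cells: it compares a register whose clock pin toggles every cycle with one whose clock is stopped whenever the enable is low, and counts the clock pulses each one receives.

```python
class Register:
    """Behavioral model of an enabled register, with or without clock gating."""

    def __init__(self, clock_gated):
        self.clock_gated = clock_gated
        self.q = 0
        self.clock_pulses = 0  # pulses that reach the register's clock pin

    def cycle(self, d, enable):
        if self.clock_gated and not enable:
            return self.q          # clock is stopped: nothing toggles
        self.clock_pulses += 1     # clock pin (and its buffers) toggle
        if enable:
            self.q = d             # capture new data only when enabled
        return self.q


# (data, enable) per cycle; the register is idle in 5 of the 8 cycles.
stimulus = [(5, True), (7, False), (7, False), (9, True),
            (1, False), (1, False), (1, False), (3, True)]

plain = Register(clock_gated=False)
gated = Register(clock_gated=True)
plain_outputs = [plain.cycle(d, en) for d, en in stimulus]
gated_outputs = [gated.cycle(d, en) for d, en in stimulus]

print(plain_outputs == gated_outputs)          # True
print(plain.clock_pulses, gated.clock_pulses)  # 8 3
```

The two registers produce identical outputs, but the gated one sees a clock pulse only in the cycles where it actually has work to do.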

6. Power Gating

A logical extension of clock gating is power gating, in which the supply voltage to circuit blocks that are not in use is temporarily turned off. Typically the supply is cut off by logic equivalent to a switch, controlled by the Power Management Unit. Power gating is made possible by implementing multiple power domains in the design, as discussed here. Power gating saves leakage power in addition to dynamic power.

Now you must be wondering why we need clock gating if power gating is the better option. The answer is that bringing a logic block up from power-off to power-on takes a significant, noticeable amount of time, so power gating introduces much more latency than clock gating.